How do you get decent, optimized lighting in WebXR? In WebXR, every ounce of optimization matters, so baking static lights is a must for a smooth-running experience. Traditional game engines like Unity usually ship with tools that make baking easy, but we brave WebXR developers are mostly on our own… (unless you are trying to use the Unity exporter, in which case… good luck). Real-time lighting is very expensive to render, so you need alternatives that create an effective atmosphere without sacrificing much performance.

Types of Bakes

There are three common methods for getting optimized lighting in your WebXR scenes:

- (combined) texture baking

- baking light maps

- vertex baking

Each of these methods has its pros and cons, which I have painfully learned through many months of trial and error. This article is meant to be a jumping-off point for other, more in-depth articles I’ll be writing on the subject (keep an eye out for those on our blog page).

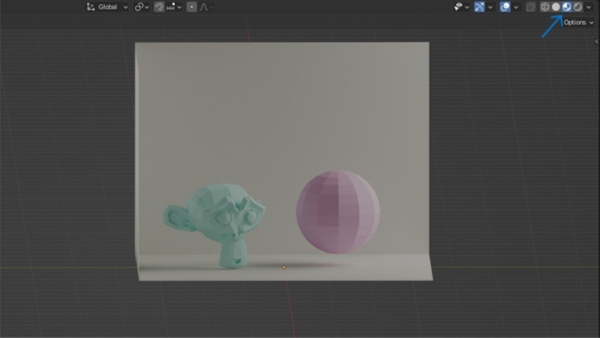

(Combined) Texture Baking

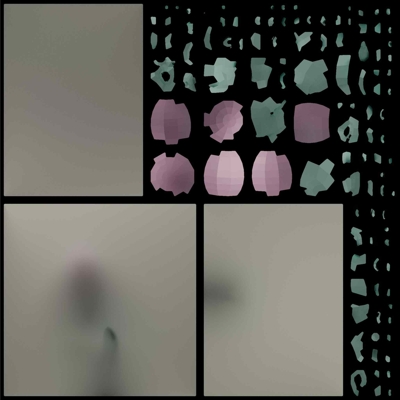

Combined texture baking produces a single image texture laid out according to the UVs of your objects. It “bakes” the diffuse color, plus lighting information, into that image. The result is something like this:

Note that we are indeed in material preview mode with no real-time light data coming through.

All lighting and color information is transferred onto a single image.

Benefits

- It is one of the few ways you can get procedural nodes to “export” from Blender.

Problems

- All object UVs need to be unwrapped and well-organized onto the texture.

- Image textures are more expensive to store, load, and sample than vertex colors (though less expensive than dynamic lighting).

- The bake is only as detailed as the texture resolution allows.

- You cannot unwrap very complex or large scenes onto a single texture. You will need multiple textures for large scenes so that the result does not look pixelated.

- Workflow is destructive.
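
To put the resolution problem in rough numbers, here is a back-of-the-envelope sketch in Python. The function names, the 70% UV utilization default, and the idea of picking a target texel density are illustrative assumptions, not part of any Blender or WebXR API.

```python
import math


def texels_per_meter(texture_size_px, surface_area_m2, uv_utilization=0.7):
    """Approximate linear texel density when a surface is packed into one square texture.

    uv_utilization is the (assumed) fraction of the texture actually covered
    by UV islands; the rest is wasted on padding and gaps.
    """
    usable_texels = texture_size_px ** 2 * uv_utilization
    return math.sqrt(usable_texels / surface_area_m2)


def textures_needed(surface_area_m2, target_texels_per_meter,
                    texture_size_px, uv_utilization=0.7):
    """Estimate how many square textures are needed to reach a target density."""
    required_texels = surface_area_m2 * target_texels_per_meter ** 2
    usable_texels = texture_size_px ** 2 * uv_utilization
    return math.ceil(required_texels / usable_texels)


if __name__ == "__main__":
    # Hypothetical scene: 100 square meters of surface baked into 2048px textures.
    print(texels_per_meter(2048, 100.0))
    print(textures_needed(100.0, 256, 2048))
```

The takeaway is that density falls with the square root of the surface area, so doubling the size of a scene on the same texture costs more sharpness than you might expect.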

Light Maps

A light map is a separate texture that holds only lighting information. It does not contain the diffuse color of the objects.

Benefits

- Diffuse color and lighting are baked separately, making the workflow more modular. This way you can make changes to your diffuse textures without affecting your light bakes.

Problems

- Objects need a second set of UVs because they need to be unwrapped differently in order to bake the lightmap properly.

- Light maps do not export with .glb or .gltf files (which is why I have never personally used this method).
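
Conceptually, a renderer combines the two textures per pixel by multiplying the diffuse color by the light map value. The sketch below shows that idea in plain Python, with colors as linear RGB floats between 0 and 1. The function name and the `intensity` parameter are made up for illustration and do not correspond to any particular engine's API.

```python
def apply_lightmap(diffuse, light, intensity=1.0):
    """Combine one diffuse texel with one light map texel (both linear RGB tuples)."""
    return tuple(d * l * intensity for d, l in zip(diffuse, light))


if __name__ == "__main__":
    baked_light = (0.5, 0.5, 0.5)   # one texel from the light map
    old_paint = (1.0, 0.5, 0.25)    # original diffuse texel
    new_paint = (0.25, 0.5, 1.0)    # diffuse texel after a texture change

    # The same light bake is reused for both diffuse colors.
    print(apply_lightmap(old_paint, baked_light))
    print(apply_lightmap(new_paint, baked_light))
```

Because the lighting lives in its own texture, swapping the diffuse color does not require a new bake, which is the modularity benefit described above.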

Vertex Baking

Note that in Blender 3.2 and above, it is now referred to as baking to “color attributes”.

Vertex baking stores RGBA values in the vertices themselves rather than referring to an image texture for color data. When the object is rendered, the GPU interpolates the color attribute across each triangle from its three vertices, so the fragment shader receives an already blended color without having to sample a texture. In short, this is much less expensive to render.
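
The interpolation itself is simple enough to sketch in plain Python. This is a simplified 2D illustration with hypothetical function names; real GPUs do this in hardware and also apply perspective correction, which is left out here.

```python
def barycentric_weights(p, a, b, c):
    """Return the weights of 2D point p relative to triangle vertices a, b, c."""
    (px, py), (ax, ay), (bx, by), (cx, cy) = p, a, b, c
    det = (by - cy) * (ax - cx) + (cx - bx) * (ay - cy)
    w_a = ((by - cy) * (px - cx) + (cx - bx) * (py - cy)) / det
    w_b = ((cy - ay) * (px - cx) + (ax - cx) * (py - cy)) / det
    return w_a, w_b, 1.0 - w_a - w_b


def interpolate_vertex_color(colors, weights):
    """Blend three RGBA vertex colors using barycentric weights."""
    return tuple(
        sum(w * color[channel] for w, color in zip(weights, colors))
        for channel in range(4)
    )


if __name__ == "__main__":
    triangle = [(0, 0), (1, 0), (0, 1)]
    # Baked colors stored at the three vertices: red, green, blue.
    colors = [(1.0, 0.0, 0.0, 1.0), (0.0, 1.0, 0.0, 1.0), (0.0, 0.0, 1.0, 1.0)]

    weights = barycentric_weights((0.25, 0.25), *triangle)
    print(interpolate_vertex_color(colors, weights))
```

A point sitting exactly on a vertex gets that vertex's color, and every other point gets a weighted mix, which is why vertex-baked lighting always looks smooth between vertices.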

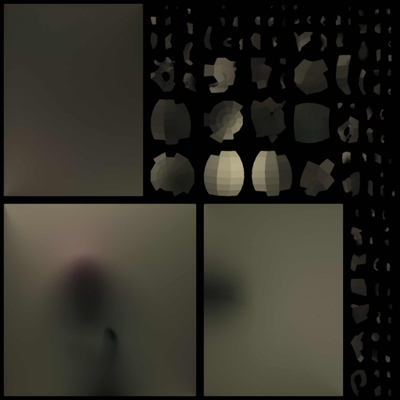

Vertex baking result after exporting to .glb

Benefits

- No UV unwrapping

- No textures to store and load at runtime, making for a very cheap render
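
For a rough sense of the storage difference, the sketch below compares the bytes needed for per-vertex colors against an uncompressed RGBA texture. It assumes 8 bits per channel for both and that a full mipmap chain adds about one third on top of the base texture; the function names and example numbers are hypothetical, and actual figures depend on your exporter and compression settings.

```python
def vertex_color_bytes(vertex_count, channels=4, bytes_per_channel=1):
    """Bytes needed to store one color per vertex."""
    return vertex_count * channels * bytes_per_channel


def texture_bytes(size_px, channels=4, mipmaps=True):
    """Approximate bytes for an uncompressed square texture held in memory."""
    base = size_px * size_px * channels
    # A full mip chain adds roughly one third on top of the base level.
    return base * 4 // 3 if mipmaps else base


if __name__ == "__main__":
    # Hypothetical scene: 50,000 vertices versus one 2048px baked texture.
    print(vertex_color_bytes(50_000))
    print(texture_bytes(2048))
```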

Problems

- Depends on geometry (i.e., fewer vertices means less detail; see photo below)

- Does not preserve a lot of detail: it can only blend between the colors of the vertices

- Harder to set up: requires a customized shader to render correctly

General Observation

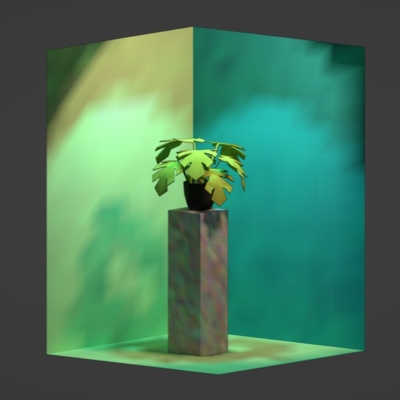

Vertex baking has a pretty distinctive feel to it, almost glowy and ethereal. See Paradowski’s “Above Paradowski” mini-golf game for a great example of a huge vertex-baked scene. You can also check out Wonderland Engine’s article about vertex baking.

If you look carefully, you can tell that geometry has a great impact on the detail of the bake.
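
A one-dimensional toy example makes the effect of geometry concrete. The sketch below "bakes" a hard shadow edge into evenly spaced vertices along a strip and then reads it back with linear interpolation, the way vertex colors are blended at render time. All names and numbers are illustrative assumptions, not code from any baking tool.

```python
def bake_to_vertices(light_fn, length, segments):
    """Sample light_fn at evenly spaced vertices along a strip of the given length."""
    return [light_fn(length * i / segments) for i in range(segments + 1)]


def sample_baked(samples, length, x):
    """Read the bake back at position x by blending the two nearest vertices."""
    segments = len(samples) - 1
    t = min(max(x / length, 0.0), 1.0) * segments
    i = min(int(t), segments - 1)
    frac = t - i
    return samples[i] * (1 - frac) + samples[i + 1] * frac


def hard_shadow(x):
    """Toy lighting: fully dark before x = 1.0, fully lit after."""
    return 0.0 if x < 1.0 else 1.0


if __name__ == "__main__":
    coarse = bake_to_vertices(hard_shadow, 4.0, 2)  # vertices every 2.0 units
    fine = bake_to_vertices(hard_shadow, 4.0, 8)    # vertices every 0.5 units

    # The true value at x = 1.5 is fully lit (1.0).
    print(sample_baked(coarse, 4.0, 1.5))
    print(sample_baked(fine, 4.0, 1.5))
```

With only two segments the shadow edge is smeared across the whole first segment, while the denser strip reproduces the lit value at the same position. More vertices cost more memory, so the usual trade-off is to add geometry only where the lighting changes quickly.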

Takeaway

Each method has its pros and cons, and depending on what you’re going for, one method might be better suited to your situation. I’m personally going to be trying out vertex baking to see what kind of results I can achieve.